Cognitive Psychology Research Methods

The methods used by cognitive psychologists have been developed to experimentally tease apart mental operations. At the outset, it should be noted that cognitive psychologists rely most heavily on the experimental method, in which independent variables are manipulated and dependent variables are measured to provide insights into the cognitive architecture. To evaluate the results of such experiments statistically, cognitive researchers rely on standard hypothesis testing, along with inferential statistics (e.g., analyses of variance), to estimate the likelihood that a particular pattern of results would occur by chance alone.

The methodological tools that cognitive psychologists use depend in large part upon the area of study. Thus, we provide an overview of the methods used in a number of distinct areas including perception, memory, attention, and language processing, along with some discussion of methods that cut across these areas.

Perceptual Methods

During the initial stage of stimulus processing, an individual encodes/perceives the stimulus. Encoding can be viewed as the process of translating the sensory energy of a stimulus into a meaningful pattern. However, before a stimulus can be encoded, a minimum or threshold amount of sensory energy is required to detect that stimulus. In psychophysics, the method of limits and the method of constant stimuli have been used to determine sensory thresholds. The method of limits converges on sensory thresholds by using sub- and suprathreshold intensities of stimuli. From these anchor points, the intensity of a stimulus is gradually increased or decreased until it is at its sensory threshold and is just detectable by the participant. In contrast, the method of constant stimuli converges on a sensory threshold by using a series of trials in which participants decide whether a stimulus was presented or not, and the experimenter varies the intensity of the stimulus. At the sensory threshold, participants are at chance of discriminating between the presence and absence of a stimulus.
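The method of limits described above can be sketched in a few lines of code. This is a minimal illustration, not a standard laboratory implementation: the function names, the simulated observer, and the intensity values are all hypothetical, and the observer is assumed to respond deterministically, which real participants do not.

```python
def crossover_point(start, step, detects, ascending, max_steps=1000):
    """Run one series and return the midpoint at which the response changes.

    An ascending series starts below threshold and stops at the first "yes";
    a descending series starts above threshold and stops at the first "no".
    """
    intensity = start
    previous = None
    for _ in range(max_steps):
        if detects(intensity) == ascending:
            if previous is None:
                raise ValueError("series must start on the far side of the threshold")
            # Threshold estimate: halfway between the last two intensities.
            return (previous + intensity) / 2
        previous = intensity
        intensity += step if ascending else -step
    raise RuntimeError("response never changed within max_steps")


def method_of_limits(series, detects):
    """Average the crossover points over (start, step, ascending) series."""
    points = [crossover_point(s, st, detects, asc) for s, st, asc in series]
    return sum(points) / len(points)


# Hypothetical observer who detects any intensity at or above 10.5 units.
observer = lambda intensity: intensity >= 10.5

# One ascending series from 5 and one descending series from 15, step 1.
estimate = method_of_limits([(5, 1, True), (15, 1, False)], observer)
```

In practice, ascending and descending series are alternated and averaged because participants tend to persist with, or anticipate a change from, their previous response.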

Although sensory threshold procedures have been important, these methods fail to recognize the role of nonsensory factors in stimulus processing. Thus, signal detection theory was developed to take into account an individual’s biases in responding to a given signal in a particular context (Green & Swets, 1966). The notion is that target stimuli produce some signal that is always present against a background of noise and that the payoffs for hits (correctly responding “yes” when the stimulus is presented) and correct rejections (correctly responding “no” when the stimulus is not presented) modulate the likelihood of an individual reporting that a stimulus is present or absent. A standard example is a sonar operator in a submarine hearing signals that could be interpreted as an enemy ship or as background noise. Because it is very important to detect a signal in this situation, the sonar operator may be biased to say “yes,” another ship is present, even when the stimulus intensity is very low and could just be background noise. This bias will lead not only to a high hit probability but also to a high false-alarm probability (i.e., incorrectly reporting that a ship is there when there is only noise). Signal detection theory allows researchers to tease apart the sensitivity that the participant has in discriminating between the noise and signal plus noise distributions (reflected by changes in a statistic called d prime) and any bias that the individual may bring into the decision-making situation (reflected by changes in a statistic called beta).

Signal detection theory has been used to illustrate the independent roles of signal strength and response bias not only in perceptual experiments, but also in other domains such as memory and decision making. Consistent with the distinction between sensitivity and bias, variables such as subject motivation and the proportion of signal trials have been shown to influence the placement of the decision criterion but not the distance between the signal plus noise and noise distributions on the sensory energy scale. On the other hand, variables such as stimulus intensity have been shown to influence the distance between the signal plus noise and noise distributions but not the placement of the decision criterion.
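The separation of sensitivity and bias can be made concrete with a short computation. The sketch below assumes the common equal-variance Gaussian version of signal detection theory, in which d prime is the difference between the z-transformed hit and false-alarm rates and beta is the likelihood ratio at the decision criterion. The function name and the example rates are hypothetical.

```python
import math
from statistics import NormalDist


def sdt_measures(hit_rate, false_alarm_rate):
    """Return (d_prime, beta) under the equal-variance Gaussian model.

    Rates must lie strictly between 0 and 1; rates of exactly 0 or 1 have
    no finite z-score and make inv_cdf raise an error.
    """
    z = NormalDist().inv_cdf          # inverse of the standard normal CDF
    z_hit = z(hit_rate)
    z_fa = z(false_alarm_rate)
    d_prime = z_hit - z_fa            # distance between the two distributions
    # Ratio of the signal-plus-noise density to the noise density at the
    # criterion; values below 1 indicate a liberal ("yes"-prone) observer.
    beta = math.exp((z_fa ** 2 - z_hit ** 2) / 2)
    return d_prime, beta


# Hypothetical neutral observer: 80% hits, 20% false alarms.
neutral = sdt_measures(0.80, 0.20)

# Hypothetical "sonar operator": many hits, but many false alarms as well.
liberal = sdt_measures(0.95, 0.50)
```

For the neutral observer beta equals 1, whereas the liberal observer yields a beta below 1, reflecting a criterion shifted toward responding "yes".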

Memory Methods

One of the first studies of human cognition was the work of Ebbinghaus (1885/1913) who demonstrated that one could experimentally investigate distinct aspects of memory. One of the methods that Ebbinghaus developed was the savings-in-learning technique in which he studied lists of nonsense syllables (e.g. puv) to a criterion of perfect recitation. Memory was defined as the reduction in the number of trials necessary to relearn a list relative to the number of trials necessary to first learn a list. Since the work of Ebbinghaus, there has been considerable development in the methods used to study memory.
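The savings measure can be expressed as a simple calculation. The sketch below uses the percent-savings form, which is one common way to express the reduction described above; the function name and the trial counts are hypothetical.

```python
def savings_percent(original_trials, relearning_trials):
    """Percentage reduction in trials needed to relearn a list.

    100 means the list was recited perfectly with no relearning trials;
    0 means relearning took as long as the original learning.
    """
    if original_trials <= 0:
        raise ValueError("original_trials must be positive")
    return 100 * (original_trials - relearning_trials) / original_trials


# Hypothetical example: 20 trials to learn the list, 5 trials to relearn it.
score = savings_percent(20, 5)
```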

Researchers often attempt to distinguish between three different aspects of memory: encoding (the initial storage of information), retention (the delay between storage and the use of information), and retrieval (the access of the earlier stored information). For example, one way of investigating encoding processes is to manipulate the participants’ expectancies. In an intentional memory task, participants are explicitly told that they will receive a memory test. In contrast, during an incidental memory test, participants are given a secondary task that may vary with respect to the types of processes engaged (e.g. making a deep semantic decision about a word versus simply counting the letters). Hyde and Jenkins (1969) found that both the intentionality of learning and the type of encoding during incidental memory tasks influenced later memory performance.

Studies of the retention of information most often involve varying the delay between study and test to investigate the influences of the passage of time on memory performance. However, researchers soon realized that it is not simply the passage of time that is important but also what occurs during the passage of time. In order to address the influence of interfering information, researchers developed retroactive interference paradigms, in which the similarity of the information presented during a retention interval was manipulated. Results from such studies indicate that interference is a powerful modulator of memory performance (see Anderson & Neely, 1996, for a review).

There are two general classes of methods used in memory research to tap into retrieval processes. On an explicit memory test, the participants are presented a list of materials during an encoding stage, and at some later point in time they are given a test in which they are asked to retrieve the earlier presented material. There are three common measures of explicit memory: recall, recognition, and cued recall. During a recall test (akin to a classroom essay test), participants attempt to remember material presented earlier either in the order that it was presented (serial recall) or in any order (free recall). Researchers often compare the order of information during recall to the initial order of presentation (serial recall functions) and also examine the organizational strategies that individuals invoke during the retrieval process (measures of subjective organization and clustering). In order to investigate more complex materials such as stories and discourse processing, researchers sometimes measure the propositional structure of the recalled information. The notion is that in order to comprehend a story, individuals rely on a network of interconnected propositions. A proposition is a flexible representation of a sentence that contains a predicate (e.g., an adjective or a verb) and an argument (e.g., a noun or a pronoun). By looking at the recall of the propositions, one can provide insights into the representation that the individuals may have gleaned from a story (Kintsch & van Dijk, 1978).

Of course, there may be memories available that the individual may not be able to produce in a free recall test. Thus, researchers sometimes employ a cued recall test, which is quite similar to free recall, with the exception that the participant is provided with a cue at the time of recall that may aid in the retrieval of the information that was presented earlier. In a recognition task (akin to a classroom multiple choice test), participants are given the information presented earlier and are asked to discriminate this information from new information. The two most common types of recognition tests are the forced choice recognition test and the free choice or yes/no recognition test. On a forced choice recognition test, a participant chooses which of two or more items is old. On a yes/no recognition test, a participant indicates whether each item in a large set of items is old or new.

A second general class of memory tests has some similarity to Ebbinghaus’s classic savings method. These are called implicit memory tests. The distinguishing aspect of implicit tests is that participants are not directly asked to recollect an earlier episode. Rather, participants are asked to engage in a task where performance often benefits from earlier exposure to the stimulus items. For example, participants might be presented with a list of words (e.g. elephant, library, assassin) to name aloud during encoding, and then later they would be presented with a list of word fragments (e.g., _le_a_t) or word stems (e.g. ele_) to complete. Some of these fragments or stems might reflect earlier presented items while others may reflect new items. In this way, one can measure the benefit (also called priming) of previous exposure to the items compared to novel items. Interestingly, amnesics are often unimpaired in such implicit memory tests, while showing considerable impairment in explicit memory tests, such as free recall.
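The priming measure described above amounts to a difference between two completion rates. The following sketch assumes that priming is scored as the proportion of fragments completed for studied items minus the proportion completed for new items; the function names and counts are hypothetical.

```python
def completion_rate(completed, presented):
    """Proportion of fragments or stems completed with the target word."""
    if presented <= 0:
        raise ValueError("presented must be positive")
    return completed / presented


def priming_effect(old_completed, old_presented, new_completed, new_presented):
    """Benefit of prior exposure: studied-item rate minus new-item rate."""
    return (completion_rate(old_completed, old_presented)
            - completion_rate(new_completed, new_presented))


# Hypothetical data: 12 of 20 studied items completed, 5 of 20 new items.
effect = priming_effect(12, 20, 5, 20)
```

The completion rate for new items serves as the baseline, since some fragments will be solved correctly without any prior exposure.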

Chronometric Methods

In addition to relying on experiments to discriminate among mental operations, cognitive psychologists have attempted to provide information regarding the speed of mental operations. Interestingly, this work began over a century ago with the work of Donders (1868/1969), who was the first to use reaction times to measure the speed of mental operations. In an attempt to isolate the speed of mental processes, Donders developed a set of response time tasks that would appear to differ only in a simple component of processing. For example, task A might require Process 1 (stimulus encoding), whereas task B might require Process 1 (stimulus encoding) and Process 2 (binary decision). According to Donders’s subtractive method, cognitive operations can be added and removed without influencing other cognitive operations. This has been referred to as the assumption of pure insertion and deletion. In the previous example, the duration of the binary decision process can be estimated by subtracting the reaction time in task A from the reaction time in task B.
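The subtraction in this example can be written out directly. The sketch below uses hypothetical reaction times in milliseconds and a hypothetical function name; it takes the assumption of pure insertion for granted, so the estimate is only as good as that assumption.

```python
from statistics import mean


def subtractive_estimate(rts_task_a, rts_task_b):
    """Estimate the duration of the process that task B adds to task A.

    Assumes pure insertion: adding the extra process leaves the duration
    of the shared process unchanged.
    """
    return mean(rts_task_b) - mean(rts_task_a)


# Hypothetical reaction times (ms).
task_a = [210, 225, 198, 217]   # Process 1 only (stimulus encoding)
task_b = [305, 290, 312, 301]   # Process 1 plus Process 2 (binary decision)

decision_time = subtractive_estimate(task_a, task_b)
```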

Sternberg (1969) pointed out that the pure insertion assumption of the subtractive method has some inherent difficulties. For example, it is possible that the speed of a given process might change when coupled with other processes. Therefore, one cannot provide a pure estimate of the speed of a given process. As an alternative, Sternberg introduced additive factors logic. According to additive factors logic, if a task contains distinct processes, there should be variables that selectively influence the speed of each process. Thus, if two variables influence different processes, their effects should be statistically additive. However, if two variables influence the same process, their effects should statistically interact. In this way, additive factors methods allow one to use studies of response latency to provide information regarding the sequence of stages and the manner in which such processes are influenced by independent variables.
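Additive factors logic can be illustrated with the cell means of a 2 x 2 design. The sketch below computes the interaction contrast, which is zero when the two factors have purely additive effects. The function name, factor labels, and mean latencies are hypothetical, and an actual study would test the interaction statistically (e.g., with an analysis of variance) rather than inspect means alone.

```python
def interaction_contrast(cell_means):
    """Difference between the effect of factor B at each level of factor A.

    cell_means maps (level_of_A, level_of_B) to a mean latency, with
    levels coded 0 and 1. A value of 0 indicates purely additive effects.
    """
    effect_b_at_a0 = cell_means[(0, 1)] - cell_means[(0, 0)]
    effect_b_at_a1 = cell_means[(1, 1)] - cell_means[(1, 0)]
    return effect_b_at_a1 - effect_b_at_a0


# Hypothetical mean latencies (ms): B adds 50 ms at both levels of A.
additive = {(0, 0): 400, (0, 1): 450, (1, 0): 480, (1, 1): 530}

# Hypothetical mean latencies (ms): B adds 50 ms at A=0 but 100 ms at A=1.
interactive = {(0, 0): 400, (0, 1): 450, (1, 0): 480, (1, 1): 580}

additive_contrast = interaction_contrast(additive)
interactive_contrast = interaction_contrast(interactive)
```

Under additive factors logic, the first pattern would suggest that the two variables influence different stages, and the second that they influence at least one stage in common.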

Unfortunately, even additive factors logic has some difficulties. Specifically, additive factors logic works if one assumes a discrete serial stage model of information processing in which the output of a processing stage is not passed on to the next stage until that stage is complete. However, there is a second class of models that assume that the output of a given stage can begin exerting an influence on the next stage of processing before completion. These are called cascade models to capture the notion that the flow of mental processes (like a stream over multiple stones) can occur simultaneously across multiple stages. McClelland (1979) has shown that if one assumes a cascade model, then one cannot use additive factors logic to unequivocally determine the locus of the effects of independent variables.

One cannot consider reaction time measures without considering accuracy because there is an inherent tradeoff between speed and accuracy. Specifically, most behaviors are less accurate when completed too quickly (e.g., consider the danger associated with driving too fast, or the errors associated with solving a set of arithmetic problems under time demands). Most chronometric researchers attempt to ensure that accuracy is quite high, most often above 90% correct, thereby minimizing the concern about accuracy. However, Pachella (1974) has developed an idealized speed-accuracy tradeoff function that provides estimates of changes in speed across conditions and how such changes might relate to changes in accuracy. The importance of Pachella’s work is that at some locations of the speed-accuracy tradeoff function, very small changes in accuracy can lead to large changes in response latency and vice versa. More recently, researchers have capitalized on the relation between speed and accuracy to empirically obtain estimates of speed-accuracy functions across different conditions. In these deadline experiments, participants are given a probe that signals them to terminate processing at a given point in time. By varying the delay of the deadline, one can track changes in the speed-accuracy function across conditions and thereby determine if an effect of a variable is in encoding and/or retrieval of information (see Meyer, Osman, Irwin, & Yantis, 1988, for a review).

It is important to note that although the temporal dynamics of virtually all cognitive processes can (and probably should) be measured, studies of attention and language processing are the areas that have relied most heavily on chronometric methods. For example, in the area of word recognition, researchers have used the lexical decision task (participants make word/nonword judgments) and speeded naming performance (speed taken to begin the overt pronunciation of a word) to develop models of word recognition. These studies have looked at variables such as the frequency of the stimulus (e.g., orb versus dog), the concreteness of the stimulus (e.g., faith versus truck), or the syntactic class (e.g., dog versus run). In addition, eye-tracking methods have been developed that allow one to measure how long the reader is looking at a particular word (e.g., fixation and gaze measures) while they are engaged in more natural reading. Eye-tracking methods have allowed important insights into the semantic and syntactic processes that modulate the speed of recognizing and integrating a word with other words in the surrounding text.

Researchers in the area of attention have also relied quite heavily on speeded tasks. For example, two common techniques in attention research are interference paradigms and cueing paradigms. In interference paradigms, at least two stimuli are presented that compete for output. A classic example of this is the Stroop task in which a person is asked to name the ink color of a printed word. Under conditions of conflict, that is, when the word green is printed in red ink, there is a considerable increase in response latency compared to nonconflict conditions (e.g., the word deep printed in red ink). In the second class of speeded attention tasks, individuals are presented with visual cues to orient attention to specific locations in the visual field. A target is either presented at that location or at a different location. The difference in response latency to cued and uncued targets is used to measure the effectiveness of the attentional cue.

Cross-Population Studies

Although cognitive psychologists rely most heavily on college students as their target sample, there is an increasing interest in studying cognitive operations across quite distinct populations. For example, there are studies of cognition from early childhood to older adulthood that attempt to trace developmental changes in specific operations such as memory, attention, and language processing. In addition, there are studies of special populations that may have a breakdown in a particular cognitive operation. Specifically, there has been considerable work attempting to understand the attentional breakdowns that occur in schizophrenia and the memory breakdowns that occur in Alzheimer’s disease. Thus, researchers have begun to explore distinct populations to provide further leverage in isolating cognitive activity.

Case Studies

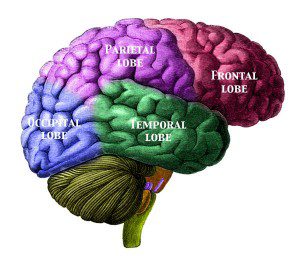

After a trauma to the brain, there are sometimes breakdowns in apparently isolated components of cognitive performance. Thus, one may provide insights into the cognitive architecture by studying these individuals and the degree to which such cognitive processes are isolated. For example, there is the classic case of H.M. in memory research. H.M. are the initials of an individual who, because of an operation to relieve epilepsy, acquired severe memory loss on explicit tests, although performance on implicit memory tests was relatively intact. In addition, there are classic dissociations across individuals with different types of language breakdowns. For example, Broca’s aphasics have relatively spared comprehension processes but difficulty producing fluent speech. In contrast, Wernicke’s aphasics have impaired comprehension processes but relatively fluent speech production.

Measures of Brain Activity

With the increasing technical sophistication from the neurosciences, there has been an influx of studies that measure the correlates of mental activity in the brain (Posner & Raichle, 1994). Although there are other methods that are available, we will only review the three most common here. The first is the evoked potential method. In this method, the researcher measures the electrical activity of systems of neurons (i.e. brain waves) as the individual is engaged in some cognitive task. This procedure has excellent temporal resolution, but the specific locus in the brain that is producing the activity can be relatively equivocal.

An approach that has much better spatial resolution is positron emission tomography (PET). In this approach, the individual receives an injection of a radioactive isotope that emits signals that are measured by a scanner. The notion is that there will be increased blood flow (which carries the isotope) to the most active areas of the brain. In this way, one can isolate mental operations by measuring brain activity under specific task demands. Typically, these scans involve about a minute of some form of cognitive processing (e.g., generating verbs to nouns), which is compared to other scans that involve some other cognitive process (e.g., reading nouns aloud). Given the window of time necessary for such scans, the PET approach has some obvious temporal limitations.

A third more recent approach is functional magnetic resonance imaging (fMRI). This procedure is less invasive because it does not involve a radioactive injection. Moreover, there has been some progress made in this area, which suggests that one can look at a more fine-grained temporal resolution in fMRI, at least compared to PET techniques. Ultimately, the wedding of evoked potential and fMRI signals may provide the necessary temporal and spatial resolution of the neural signals that underlie cognitive processes.

Computational Modeling

Most models of cognition, although grounded in the experimental method, are metaphorical and noncomputational in nature (e.g., short-term versus long-term memory stores). However, there is also an important method in cognitive psychology that uses computationally explicit models. One example of this approach is connectionist/neural network modeling in which relatively simple processing units are often layered in a highly interconnected network (Rumelhart & McClelland, 1986). Activation patterns across the simple processing units are computationally tracked across time to make specific predictions regarding the effects of stimulus and task manipulations. Computational models are used in a number of ways to better understand the cognitive architecture. First, these models force researchers to be very explicit regarding the underlying assumptions of metaphorical models. Second, these models often can be used to help explain differences across conditions. Specifically, if a manipulation has a given effect in the data, then one may be able to trace that effect in the architecture within the model. Third, these models can provide important insights into different ways of viewing a given set of data. For example, as noted earlier, McClelland (1979) demonstrated that cascade models can handle data that were initially viewed as supportive of serial stage models.

Summary

The goal of this article is to provide an encapsulated review of some of the methods that cognitive psychologists use to better understand mental operations. Researchers in cognitive psychology have developed a set of research tools that are almost as rich and diverse as cognition itself.
