Validation Definition

In the broadest sense, validation refers to the process of establishing the truth, accuracy, or soundness of some judgment, decision, or interpretation. In industrial and organizational psychology, validation generally focuses on the quality of interpretations drawn from psychological tests and other assessment procedures that are used as the basis for decisions about people’s work lives. Before discussing validation specifically, it is necessary to clarify some concepts that are integral to the process of decision making based on psychological testing.

Defining the Focus of Validation

It is important to realize that validity is not a characteristic of a test or assessment procedure but of the inferences and decisions made from test or assessment information. Validation is the process of generating and accumulating evidence to support the soundness of the inferences made in a specific situation. Logically, therefore, to examine the concept of validation, it is important to specify (a) the types of inferences involved in applied assessment situations and (b) the nature of evidence that can be used to support such inferences. Different validation strategies reflect different ways to gather and examine the evidence supporting these important inferences.

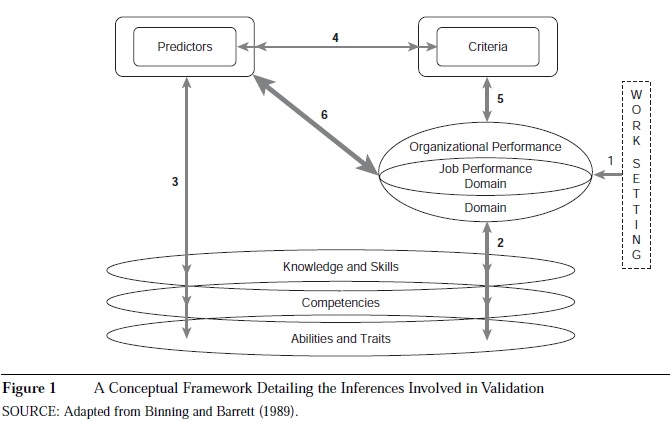

Applied psychological assessment involves a series of professional activities. A general characterization of these activities includes the following steps: (a) analysis of a work setting to determine (b) the important task and organizational behaviors (and subsequent outcomes) composing a performance domain, which then guide (c) the selection or development of certain assessment procedures (predictors), which make possible (d) predictions about the likelihood that assessees will exhibit important behaviors, followed by (e) the measurement of individual work behavior using some operational criterion measure. This process implies a conceptual framework, which is presented in Figure 1.

This framework comprises the following inferences:

- Inference 1. The analysis of the work setting yields an accurate description of performance.

- Inference 2. The construct domains tapped by the predictor overlap with the performance domains.

- Inference 3. The predictors are adequate samples of relevant psychological construct domains.

- Inference 4. Predictor scores relate to operational criterion measurements.

- Inference 5. The operational criterion measures adequately sample from the performance domains.

- Inference 6. The predictors relate to the performance domains.

The analysis of a work setting generates a conception of desired performance, or a performance domain. Performance domains are clusters of work activities and outcomes that are especially valued by an organization. Selection decisions based on psychological assessment represent attempts to identify regularities in work behavior—but only those behaviors that are identified by the organization as relevant for goal attainment. Personnel selection, then, is the process of identifying and mapping predictor samples of behavior to effectively overlap with performance domains. Validity can be described as the extent to which the predictor sample meaningfully overlaps with the performance domain.

Validation is the process of generating evidence that the inferences drawn from assessment information are accurate. Inference 6 is the keystone inference in applied decision making because it represents whether a specific assessment process yields information that makes possible the prediction of important organizational outcomes. Inference 6 cannot be tested directly because it links predictor measurements with performance domains that are hypothetical domains of idealized work behavior. However, Inference 6 is tied in closed logical loops to other inferences in the framework, and therefore these other inferences play a role in validation. If certain inferences (which will be discussed in the next section) can be substantiated with sufficient evidence, then Inference 6, by implication, is substantiated. To put it another way, validation is the process of generating evidence to support Inference 6, and this involves supporting the other inferences in the framework.

Of course, many specific forms of Inference 6 may occur in a given decision situation, and the evidence needed to validate each may differ. For example, the Minnesota Multiphasic Personality Inventory (MMPI) could be administered to police candidates to predict who is more likely to possess antisocial tendencies on the job. This form of Inference 6 would require different validity evidence than if the MMPI were used to predict who is likely to experience debilitating anxiety on the job. Regardless of the specific nature of the decisions being made, there are three general approaches to generating validity evidence, and within each approach, there are specific strategies for generating and interpreting this evidence.

Three General Approaches to Validation

There are three broad categories of validity evidence, often labeled criterion-related, construct-based, and content-based strategies. This trilogy of validity terms was first articulated in the Technical Recommendations for Psychological Tests and Diagnostic Techniques, published in 1954 by the American Psychological Association, the American Educational Research Association, and the National Council on Measurements Used in Education. This trilogy can be usefully viewed as three broad strategies for generating validity evidence.

Criterion-Related Validation Strategies

One general approach to justifying Inference 6 would be to generate direct empirical evidence that predictor scores relate to accurate measurements of job performance. Inference 4 represents this linkage, which historically has been of special pragmatic concern to selection psychologists because of the allure of quantitative indexes of test-performance relationships. Any given decision situation might employ multiple predictors and multiple criterion measures, so numerous relationships can be specified.

The most common form of criterion-related validity evidence is correlational. This type of evidence is generated by statistically correlating predictor scores with criterion scores. The size and direction of predictor-criterion correlation coefficients are statistical indexes of the relationship between the predictor and the criterion. However, this correlation supports Inference 6 only if the criterion measure adequately taps the relevant performance domain. To have complete confidence in the validity of Inference 6, both Inferences 4 and 5 must be justified. An examination of Figure 1 shows that validating Inferences 4 and 5, by implication, validates Inference 6 because they complete a closed logical loop.
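As a minimal sketch of the correlational evidence described above, the following computes a Pearson correlation between predictor scores and criterion scores. The data and variable names are hypothetical and chosen only for illustration. The resulting coefficient speaks to Inference 4 alone; it says nothing about whether the criterion measure adequately samples the performance domain (Inference 5).

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    dx = [xi - mean_x for xi in x]
    dy = [yi - mean_y for yi in y]
    cross_products = sum(a * b for a, b in zip(dx, dy))
    ss_x = sum(a * a for a in dx)
    ss_y = sum(b * b for b in dy)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined when a variable has no variance")
    return cross_products / sqrt(ss_x * ss_y)


# Hypothetical data: selection test scores (predictor) and
# supervisor performance ratings (operational criterion measure).
predictor_scores = [52, 61, 47, 70, 66, 58, 49, 75]
criterion_ratings = [3.1, 3.8, 2.9, 4.4, 3.9, 3.5, 3.2, 4.6]

validity_coefficient = pearson_r(predictor_scores, criterion_ratings)
print(f"Predictor-criterion correlation: r = {validity_coefficient:.2f}")
```

The sign of the coefficient gives the direction of the relationship and its magnitude gives the strength. A sample this small is used here only to keep the example readable.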

Criterion-related validity evidence is generally correlational because selection decisions are generally based on individual differences that are not amenable to experimental manipulation. This evidence is generally collected from current employees through a concurrent validation study or from job applicants through a predictive validation study, and many specific methodological variations exist for collecting the predictor and criterion data. Other strategies for generating empirical data relevant to Inferences 4 and 5 include experimental and quasi-experimental research. For example, identifying specific groups of applicants who are expected to differ on some predictive characteristic, then determining whether meaningful criterion differences exist, is an alternative to classic correlational research. Some predictive characteristics can be manipulated in field or laboratory experiments, and this research can also provide criterion-related validity evidence.
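The group-comparison alternative mentioned above can be sketched as a standardized mean difference on the criterion. This is one possible way to summarize such a comparison, not a prescribed method; the grouping variable, the data, and the choice of Cohen's d with a pooled standard deviation are assumptions made for illustration.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("each group needs at least 2 observations")
    pooled_variance = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    if pooled_variance == 0:
        raise ValueError("effect size is undefined when both groups have no variance")
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_variance)


# Hypothetical criterion scores for two groups of applicants expected to
# differ on a predictive characteristic (for example, prior job training).
criterion_with_characteristic = [4.1, 3.8, 4.5, 3.9, 4.2, 4.0]
criterion_without_characteristic = [3.2, 3.6, 3.0, 3.5, 3.3, 3.4]

effect_size = cohens_d(criterion_with_characteristic, criterion_without_characteristic)
print(f"Standardized criterion difference: d = {effect_size:.2f}")
```

A positive value indicates that the group expected to score higher on the criterion did so. Whether the difference is meaningful in practice remains a judgment about the criterion itself.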

Construct-Based Validation Strategies

What selection psychologists have traditionally implied by the label construct validity is tied to Inferences 2 and 3. It can be assumed that if Inferences 2 and 3 can be supported by sound evidence, then one can confidently believe Inference 6 to be true, again because they form a closed logical loop. If it can be shown that a test measures a specific psychological construct (Inference 3) that has been determined to be critical for job performance (Inference 2), then inferences about job performance from test scores (Inference 6) are logically justified. Of course, in any given decision situation, multiple psychological constructs may be thought to underlie performance (variations of Inference 2) and subsequently may be assessed (variations of Inference 3), and each of these requires validity evidence.

How does a selection psychologist support Inferences 2 and 3? Evidence supporting Inference 3 primarily takes the form of empirically demonstrated relationships and judgments that are both convergent and discriminant in nature. Convergent evidence exists, for example, when (a) predictor scores relate to scores on other tests of the same construct, (b) predictor scores from people who are known to differ on the focal construct also differ in other predictable ways, or (c) predictor scores relate to scores on tests of other constructs that are theoretically expected to be related. Discriminant evidence exists when predictor scores do not relate to scores on tests of theoretically independent constructs. The process of developing and researching measures of psychological constructs, then refining them to ensure that they are measuring the constructs we think they are measuring, is a scientific process that is central to the development of psychological theories of individual differences.
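The contrast between convergent and discriminant evidence can be illustrated with two correlations for the same predictor. This is a rough sketch under stated assumptions: the three score lists are hypothetical, the construct labels are placeholders, and a real study would involve larger samples and several comparison measures.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    dx = [xi - mean_x for xi in x]
    dy = [yi - mean_y for yi in y]
    ss_x = sum(a * a for a in dx)
    ss_y = sum(b * b for b in dy)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined when a variable has no variance")
    return sum(a * b for a, b in zip(dx, dy)) / sqrt(ss_x * ss_y)


# Hypothetical scores for the same eight people on three measures.
new_predictor = [10, 14, 9, 18, 16, 12, 20, 11]            # new test of the focal construct
same_construct_test = [22, 27, 20, 35, 30, 25, 38, 24]     # established test, same construct
independent_construct_test = [50, 55, 52, 48, 56, 51, 51, 49]  # theoretically unrelated construct

r_convergent = pearson_r(new_predictor, same_construct_test)
r_discriminant = pearson_r(new_predictor, independent_construct_test)

print(f"Convergent correlation:   {r_convergent:.2f}")
print(f"Discriminant correlation: {r_discriminant:.2f}")
```

Support for Inference 3 comes from the pattern: a strong relationship with the same-construct measure together with a weak relationship with the theoretically independent one.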

Because it links two hypothetical behavioral domains, Inference 2 cannot be examined empirically, at least not in the form of actual behavioral measurements. Rather, informed judgments about performance domains and psychological construct domains are required. Inference 2 must be justified theoretically and logically on the basis of accumulated scientific knowledge of relationships between performance domains and psychological construct domains. A common basis for linking these two is systematic job analysis or competency modeling, which produces job specifications in the form of knowledge, skill, ability, and other constructs required for job performance.

Inference 2 involves the translation of work behavior into psychological construct terms. This is often done in a relatively unstructured way, relying heavily on the work analyst’s qualitative judgments about relations between the performance domain and psychological constructs. However, some job analysis and competency modeling methods explicitly structure the specification of behaviors in the performance domain and the overlap with psychological construct domains. Regardless of how Inference 2 is substantiated, the validity of Inference 6 is enhanced to the extent that this process is viewed as professionally and scientifically credible and is accompanied by sound evidence for Inference 3.

Content-Based Validation Strategies

A third general approach to justifying Inference 6 involves demonstrating that the predictor samples behavior that is isomorphic with the behaviors composing the performance domain. This line of reasoning is particularly defensible when one realizes that predictor tests are always samples of behavior from which we infer something about behavior on a job. The behaviors sampled may be dissimilar (e.g., scores from the Rorschach inkblot test) or similar (e.g., scores from a work sample simulation test) to the work behaviors being predicted. If an applicant performs behaviors as part of the assessment phase that closely resemble behaviors in the performance domain, then logically Inference 6 is better justified. This line of reasoning underlies the type of evidence that is traditionally labeled content validity. Specific procedures for analyzing the degree of isomorphism between predictors and criteria have been proposed, but the same basic logic underlies each.

Content-related evidence of validity involves justifying Inference 6 by examining the manner in which the predictor directly samples the performance domain. Here, the predictor is examined as a sample from the performance domain rather than a sample from an underlying psychological construct domain. As in statistical sampling theory, if a predictor sample is constructed in congruence with certain principles (e.g., ensuring representativeness as well as relevance of the sample), one can assume that scores from that sample will accurately estimate the universe from which the sample is drawn. Therefore, when a selection psychologist can rationally defend the strategy for sampling the performance domain used in a given testing situation, content validity evidence supports the inference that scores from the test are valid for predicting future performance (i.e., Inference 6).

The logical assumption of content-based validation is that if a job applicant performs desired behaviors at the assessment phase, he or she can perform those behaviors on the job. Of course, many predictive difficulties arise when one considers the complexity of human motivation in this context. Evidence that someone can perform behaviors in one situation does not necessarily indicate that he or she will perform them in a particular work situation. These complexities notwithstanding, judgments and data that are relevant to whether a predictor domain directly maps onto the performance domain are the core of content-based validation strategies.

Many specific methodologies exist to guide judgments about behavioral domain overlap. Some of these methods involve having subject matter experts systematically examine performance domains and individually rate the extent to which each predictor element is relevant to the performance domain. Such structured and quantitative content-based methods can yield credible evidence about whether a predictor is likely to predict performance.
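One widely cited quantitative method of this kind is Lawshe's (1975) content validity ratio (CVR), in which each panelist judges whether a predictor element is essential to the job. For an element rated by N panelists, n_e of whom call it essential, CVR = (n_e - N/2) / (N/2). The sketch below applies that formula; the item names, the panel ratings, and the rating labels are hypothetical.

```python
def content_validity_ratio(ratings, essential_label="essential"):
    """Lawshe's CVR for one item: (n_e - N/2) / (N/2).

    ratings: one judgment per subject matter expert.
    Returns a value from -1.0 (no one rates it essential) to 1.0 (everyone does).
    """
    panel_size = len(ratings)
    if panel_size == 0:
        raise ValueError("at least one rating is required")
    n_essential = sum(1 for rating in ratings if rating == essential_label)
    half_panel = panel_size / 2
    return (n_essential - half_panel) / half_panel


# Hypothetical ratings from a panel of eight subject matter experts.
panel_ratings = {
    "typing accuracy exercise": ["essential"] * 7 + ["useful"],
    "filing simulation": ["essential"] * 4 + ["useful"] * 4,
    "abstract puzzle task": ["essential"] + ["not necessary"] * 7,
}

for item, ratings in panel_ratings.items():
    print(f"{item}: CVR = {content_validity_ratio(ratings):+.2f}")
```

A CVR of zero means exactly half the panel rated the element essential. How high a value must be before an element is retained depends on panel size and is a separate decision not modeled here.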

Validation Strategies as Predictor-Development Processes

Thus far, the concepts of construct-based, content-based, and criterion-related evidence have been discussed as general strategies that can be used to justify decision validity. However, the implications of differences among the three can be traced back in the decision-making process. By doing so, their differences can be more clearly appreciated.

Selection decision making involves two fundamental phases: (a) constructing the predictor as a sample of some behavioral domain and (b) using this behavioral information to make predictions about future job behavior. This latter data combination phase is the immediate precursor to employment decisions and therefore has received considerable legal and professional scrutiny. Yet the data-collection phase, which involves specifying the behavioral database, has equally important implications for validity.

To briefly review Figure 1, the development of any personnel-selection system begins with the delineation of the relevant performance domain. From this delineation of desirable job behaviors and outcomes, selection psychologists determine which construct domains should be sampled by the predictors. There are three routes from the performance domain to predictor development: The construct-based approach involves identifying psychological construct domains that overlap significantly with the performance domain (Inference 2) and then developing predictors that adequately sample these construct domains (Inference 3). The content-based approach involves developing predictors that directly sample the performance domain. The criterion-related approach involves developing some operational measure of behaviors in the performance domain (Inference 5) and then identifying or developing predictors that will relate empirically with the operational criterion measure (Inference 4). Of course, all of these depend on the accuracy with which the performance domain has been delineated (Inference 1).
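The three routes just described can be summarized as sets of inferences that jointly close a logical loop around Inference 6. The sketch below is a deliberate simplification: it treats each inference as simply supported or not, whereas real validity evidence is a matter of degree. The identifiers are illustrative, and `content_sampling` is a placeholder introduced here for the defensible sampling of the performance domain, which the framework does not number.

```python
# Inferences each route must support, following the description above.
ROUTES = {
    "criterion-related": {"inference_4", "inference_5"},
    "construct-based": {"inference_2", "inference_3"},
    "content-based": {"content_sampling"},  # placeholder label, not a numbered inference
}

# Every route depends on an accurate delineation of the performance domain.
SHARED_PREREQUISITE = "inference_1"


def routes_supporting_inference_6(supported):
    """Return the routes whose required inferences are all in `supported`."""
    supported = set(supported)
    if SHARED_PREREQUISITE not in supported:
        return []
    return sorted(name for name, needed in ROUTES.items() if needed <= supported)


# Hypothetical evidence gathered in one validation effort.
evidence = {"inference_1", "inference_2", "inference_3", "inference_4"}
print(routes_supporting_inference_6(evidence))
```

In this example the criterion-related loop stays open because nothing supports Inference 5, even though a predictor-criterion relationship (Inference 4) has been shown.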

There is a fundamental difference between the criterion-related approach and the other two approaches. A criterion measure is merely an operational sample of the performance domain. Predictor-criterion relationships must result from either the operation of psychological constructs or the sampling of behaviors that are especially similar to those in the performance domain. From this perspective, the construct-based and content-based approaches represent the two fundamental predictor sampling strategies. Construct-based implies that predictor sampling is guided by evoking a psychological construct domain. Content-based implies that predictor sampling is guided by evoking a performance domain. To the extent that the two domains are derived differently and relations between the two are not well understood, construct- and content-based approaches can lead to substantive differences in predictor development and decision validity. In contrast to the construct- and content-based approaches, the criterion-related approach is best characterized as a general research strategy for empirically assessing the quality of the two fundamental predictor sampling strategies. Judgments of validity are tantamount to judgments about the adequacy of behavior sampling (construct- and content-based) or direct empirical indexes of such adequacy (criterion-related).

Validating Employment Decisions

Recall that validation is the process of generating and accumulating evidence to support the soundness of the inferences made in a specific situation. Three general approaches to compiling evidence have been discussed, as well as their relative strengths and weaknesses. The convenience of discussing these strategies separately should not cloud a very important point: Validity is a unitary concept. Specific decisions or uses of psychological test information are either valid or not, and there are different forms of evidence relevant to the determination of validity.

The validity of a decision can be reasonably compared to the guilt or innocence of a defendant in a court case. When a trial begins, we generally do not know whether the defendant is guilty or innocent. The trial unfolds as a forum for presenting evidence collected during an investigation. Some evidence is direct and very compelling, whereas other evidence is circumstantial and open to skepticism. Some attorneys are better able to communicate the evidence, and juries are more or less able to grasp the complexities of the evidence. Validation parallels this characterization. We seldom know whether a selection decision (derived from a specific assessment process) is valid at the time it is made. However, we can anticipate needing to justify the decision process, so we investigate the situation and gather evidence of various forms, direct and circumstantial, to support a claim of validity. Ultimately, our validation efforts are reviewed by people who judge their credibility and deem the process to be sufficiently valid or not.

Conclusion

Validation, as a systematic process of generating and examining evidence, is the essence of scientific research and theoretical development, and its goal is to more fully understand and explain human functioning in work settings. There is no inherent superiority of one type of validity evidence over other lines of evidence. From this perspective, all validity evidence may be relevant to determining decision quality. Competently conducted validation efforts are ones that comprehensively generate credible information about all of the inferential linkages that compose the current framework and focus appropriate attention on predictor content, psychological constructs, and empirically demonstrated relationships.

References:

- Binning, J. F., & Barrett, G. V. (1989). Validity of personnel decisions: A conceptual analysis of the inferential and evidential bases. Journal of Applied Psychology, 74, 478-494.

- Cronbach, L. J. (1988). Five perspectives on validity argument. In H. Wainer & H. Braun (Eds.), Test validity (pp. 3-17). Hillsdale, NJ: Lawrence Erlbaum.

- Cronbach, L. J., & Meehl, P. E. (1955). Construct validity in psychological tests. Psychological Bulletin, 52, 281-302.

- Equal Employment Opportunity Commission. (1978). Uniform guidelines on employee selection procedures. Federal Register, 43(166), 38295-38309.

- Guion, R. M. (1998). Assessment, measurement, and prediction for personnel decisions. Mahwah, NJ: Lawrence Erlbaum.

- Hoffman, C. C., Holden, L. M., & Gale, K. (2000). So many jobs, so little “N”: Applying expanded validation models to support generalization of cognitive test validity. Personnel Psychology, 53, 955-992.

- Lawshe, C. H. (1975). A quantitative approach to content validity. Personnel Psychology, 28, 563-575.

- Lubinski, D. (2000). Scientific and social significance of assessing individual differences: Sinking shafts at a few critical points. Annual Review of Psychology, 51, 405-444.

- Messick, S. (1981). Constructs and their vicissitudes in educational and psychological measurement. Psychological Bulletin, 89, 575-588.

- Messick, S. (1995). Validity of psychological assessment: Validation of inferences from persons’ responses and performances as scientific inquiry into score meaning. American Psychologist, 50, 741-749.

- Nunnally, J. C., & Bernstein, I. H. (1994). Psychometric theory (3rd ed.). New York: McGraw-Hill.

- Sussmann, M., & Robertson, D. U. (1986). The validity of validity: An analysis of validation study designs. Journal of Applied Psychology, 71, 461-468.